Databricks' dolly-v2-12b vs ggml.ai

Dive into the comparison of Databricks' dolly-v2-12b vs ggml.ai and discover which AI Large Language Model (LLM) tool stands out. We examine alternatives, upvotes, features, reviews, pricing, and beyond.

In a comparison between Databricks' dolly-v2-12b and ggml.ai, which one comes out on top?

When we place Databricks' dolly-v2-12b and ggml.ai, two AI-powered large language model (LLM) tools, side by side, several key similarities and differences come to light. Neither tool takes the lead, as both have the same upvote count. You can help us determine the winner by casting your vote and tipping the scales in favor of one of the tools.


Databricks' dolly-v2-12b

What is Databricks' dolly-v2-12b?

Databricks' dolly-v2-12b is an instruction-following language model with 12 billion parameters. It is built on Pythia-12b and shows instruction-following quality that is not characteristic of that foundation model. Dolly-v2-12b is licensed for commercial use and was fine-tuned on a diverse mix of instructions covering areas like brainstorming, classification, and summarization. While it is not a state-of-the-art model, its instruction adherence is notably better than its base model's. The family also includes the smaller dolly-v2-7b and dolly-v2-3b models. The model can be loaded from the Hugging Face platform with Transformers and PyTorch, and its fine-tuning dataset, databricks-dolly-15k, is available for use in other AI applications.
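As a rough illustration of the Transformers and PyTorch route described above, the sketch below wraps the model in a text-generation pipeline. The helper name `make_generator` is hypothetical, and the settings shown (bfloat16 weights, `trust_remote_code=True`, `device_map="auto"`) are assumptions about a typical setup that requires the `torch`, `transformers`, and `accelerate` packages; adjust them to your hardware.

```python
MODEL_ID = "databricks/dolly-v2-12b"  # Hugging Face Hub id; the smaller variants need less memory


def make_generator(model_id=MODEL_ID):
    """Build a text-generation pipeline for a Dolly v2 checkpoint (hypothetical helper)."""
    # Imported lazily so that defining this helper does not require the heavy dependencies.
    import torch
    from transformers import pipeline

    return pipeline(
        model=model_id,
        torch_dtype=torch.bfloat16,  # 16-bit weights: half the memory of float32
        trust_remote_code=True,      # allow the checkpoint's own instruction pipeline code to run
        device_map="auto",           # place layers on available devices; needs `accelerate`
    )


# Example use (downloads the checkpoint on first run, so it is left commented out):
#   generate_text = make_generator()
#   result = generate_text("Explain the difference between nuclear fission and fusion.")
#   print(result[0]["generated_text"])
```

Passing `"databricks/dolly-v2-3b"` as `model_id` is a reasonable way to try the same code on a smaller GPU.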

ggml.ai

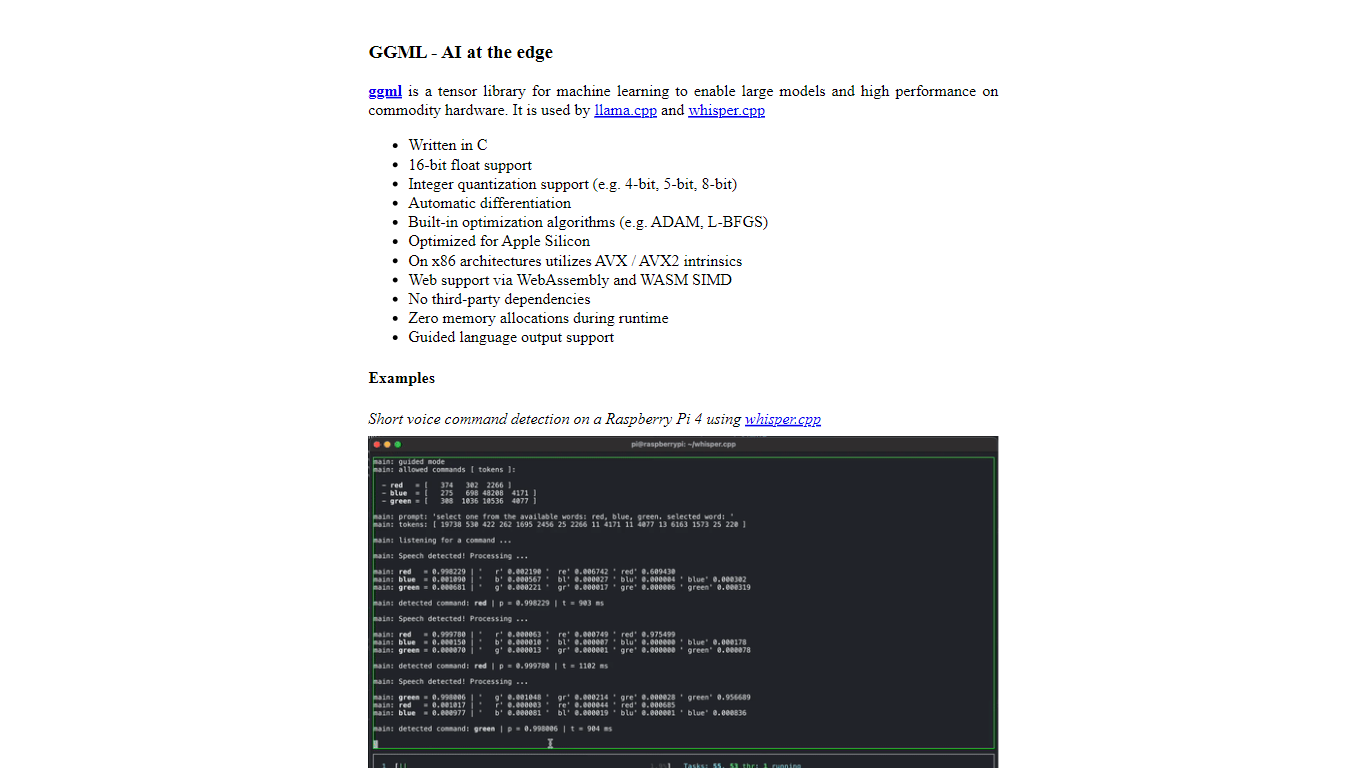

What is ggml.ai?

ggml.ai develops ggml, a tensor library for machine learning that brings large-model support and high performance to common hardware platforms, so developers can run advanced AI models at the edge without specialized equipment. The library is written in C and offers 16-bit float support, integer quantization, automatic differentiation, and built-in optimization algorithms such as ADAM and L-BFGS. It is optimized for Apple Silicon and uses AVX/AVX2 intrinsics on x86 architectures. Web-based applications can use it through WebAssembly and WASM SIMD support. With zero memory allocations at runtime and no third-party dependencies, ggml.ai offers a minimal and efficient solution for on-device inference.
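To give a feel for what integer quantization means in practice, here is a minimal sketch of block-wise symmetric quantization in plain Python. It is an illustration of the general idea only, not ggml's actual storage format or API; the function names, the block size of 32, and the 8-bit default are all assumptions chosen for the example.

```python
import math


def quantize_block(values, bits=8):
    """Quantize one block of floats to signed integers sharing a single scale."""
    qmax = 2 ** (bits - 1) - 1                      # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)              # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0        # avoid dividing by zero for all-zero blocks
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def quantize(values, block_size=32, bits=8):
    """Split the weights into fixed-size blocks and quantize each block independently."""
    return [
        quantize_block(values[i:i + block_size], bits)
        for i in range(0, len(values), block_size)
    ]


def dequantize(blocks):
    """Reconstruct approximate floats from (scale, integers) blocks."""
    restored = []
    for scale, quants in blocks:
        restored.extend(q * scale for q in quants)
    return restored


# Round-trip 64 example weights and report the worst reconstruction error.
weights = [math.sin(i) for i in range(64)]
restored = dequantize(quantize(weights))
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max round-trip error: {max_error:.5f}")
```

Each block stores one float scale plus small integers, which is why quantized models take far less memory than their 16-bit or 32-bit originals, at the cost of a bounded rounding error per weight.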

Projects like whisper.cpp and llama.cpp demonstrate the high-performance inference capabilities of ggml.ai: whisper.cpp provides speech-to-text, and llama.cpp focuses on efficient inference of Meta's LLaMA large language model. The company welcomes contributions to its codebase and follows an open-core development model, with the code released under the MIT license. As ggml.ai continues to expand, it is seeking full-time developers who share its vision for on-device inference to join its team.

Designed to push the envelope of AI at the edge, ggml.ai is a testament to the spirit of play and innovation in the AI community.


Databricks' dolly-v2-12b Top Features

Large Model Size: Has 12 billion parameters for intricate text generation.

Advanced Instruction Following: Exhibits high-quality instruction following, surpassing its foundational model.

Fine-Tuning Dataset: Trained on the databricks-dolly-15k dataset, which is composed of diverse instruction-and-response records.

Open Science Commitment: Released under a permissive license, dolly-v2-12b furthers the democratization of AI.

Flexible Usage: Supports various GPU configurations and integrates with the Transformers library.
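When choosing a GPU configuration, a quick back-of-the-envelope estimate of weight memory is parameter count times bytes per parameter. The sketch below applies that arithmetic to the three model sizes. The parameter counts are nominal figures read off the model names (an assumption, the exact counts differ slightly), and the estimate covers weights only, not activations or other runtime overhead.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1}

# Nominal parameter counts inferred from the model names (assumption).
NOMINAL_PARAMS = {
    "dolly-v2-12b": 12_000_000_000,
    "dolly-v2-7b": 7_000_000_000,
    "dolly-v2-3b": 3_000_000_000,
}


def weight_memory_gb(num_params, dtype="bfloat16"):
    """Return the memory needed for the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, params in NOMINAL_PARAMS.items():
    print(f"{name}: about {weight_memory_gb(params):.0f} GB of weights in bfloat16")
```

By this estimate the 12-billion-parameter model needs roughly 24 GB for 16-bit weights, which is why the smaller variants or lower-precision loading are common choices on a single consumer GPU.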

ggml.ai Top Features

Written in C: Ensures high performance and compatibility across a range of platforms.

Optimization for Apple Silicon: Delivers efficient processing and lower latency on Apple devices.

Support for WebAssembly and WASM SIMD: Lets web applications use machine learning capabilities.

No Third-Party Dependencies: Makes for an uncluttered codebase and convenient deployment.

Guided Language Output Support: Enhances human-computer interaction with more intuitive AI-generated responses.

Databricks' dolly-v2-12b Category

- Large Language Model (LLM)

ggml.ai Category

- Large Language Model (LLM)

Databricks' dolly-v2-12b Pricing Type

- Freemium

ggml.ai Pricing Type

- Freemium