Last updated 10-23-2025


ggml.ai

ggml.ai brings machine learning to the edge with ggml, a tensor library built for large models and high performance on commodity hardware, so developers can run advanced AI algorithms without specialized equipment. The library is written in C and supports 16-bit floats and integer quantization, automatic differentiation, and built-in optimization algorithms such as ADAM and L-BFGS. It is optimized for Apple Silicon, uses AVX/AVX2 intrinsics on x86 architectures, and runs in web applications through WebAssembly and WASM SIMD. With zero memory allocations at runtime and no third-party dependencies, it offers a minimal, efficient foundation for on-device inference.
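To make the idea of integer quantization concrete, here is a minimal sketch in Python. It is not ggml's actual storage format or API. The block size of 32, the 8-bit range, and the function names are all assumptions chosen for illustration. Each block of weights is stored as one floating-point scale plus small integers, which is the general idea that lets large models fit in less memory.

```python
BLOCK_SIZE = 32  # assumed block length for this sketch, not taken from ggml


def quantize_block(values):
    """Quantize one block of floats to (scale, list of ints in [-127, 127])."""
    amax = max((abs(v) for v in values), default=0.0)
    # One scale per block; fall back to 1.0 for an all-zero block.
    scale = amax / 127.0 if amax > 0 else 1.0
    # Round to the nearest integer step and clamp to the signed 8-bit range.
    quants = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from a quantized block."""
    return [q * scale for q in quants]


def quantize(values, block_size=BLOCK_SIZE):
    """Split values into blocks and quantize each block independently."""
    return [
        quantize_block(values[i:i + block_size])
        for i in range(0, len(values), block_size)
    ]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into one list."""
    out = []
    for scale, quants in blocks:
        out.extend(dequantize_block(scale, quants))
    return out
```

In this scheme the reconstruction error of each value is at most half of its block's scale, so blocks with a small value range are stored more precisely than blocks with large outliers.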

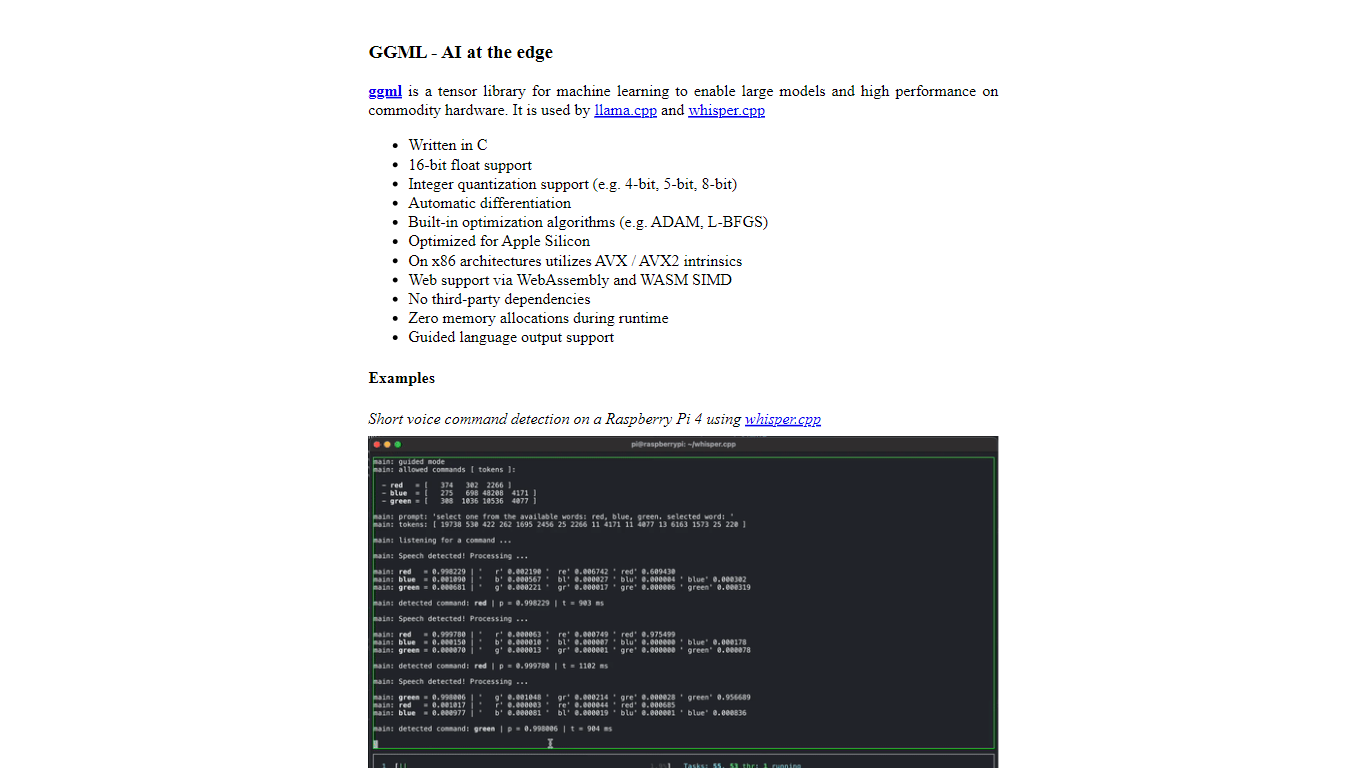

Projects such as whisper.cpp and llama.cpp demonstrate ggml's high-performance inference: whisper.cpp provides speech-to-text based on OpenAI's Whisper model, and llama.cpp focuses on efficient inference of Meta's LLaMA large language model. The company welcomes contributions to its codebase and follows an open-core development model, with the code released under the MIT license. As ggml.ai expands, it is seeking full-time developers who share its vision for on-device inference.

Designed to push the envelope of AI at the edge, ggml.ai is a testament to the spirit of play and innovation in the AI community.

Written in C: Ensures high performance and compatibility across a range of platforms.

Optimization for Apple Silicon: Delivers efficient processing and lower latency on Apple devices.

Support for WebAssembly and WASM SIMD: Lets web applications use machine learning capabilities in the browser.

No Third-Party Dependencies: Makes for an uncluttered codebase and convenient deployment.

Guided Language Output Support: Enhances human-computer interaction with more intuitive AI-generated responses.
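One common way to guide a language model's output is to restrict which tokens may be chosen at each generation step. The sketch below shows that idea in Python. It is not ggml's implementation, and the function name `constrained_argmax` and its parameters are hypothetical.

```python
import math


def constrained_argmax(logits, allowed_ids):
    """Return the id of the highest-scoring token among allowed_ids.

    logits: list of scores, one per vocabulary entry.
    allowed_ids: set of token ids permitted at this step (hypothetical input,
    e.g. derived from a grammar or schema).
    """
    if not allowed_ids:
        raise ValueError("no tokens allowed at this step")
    # Disallowed tokens get -inf so they can never win the argmax.
    masked = [
        score if i in allowed_ids else -math.inf
        for i, score in enumerate(logits)
    ]
    return max(range(len(masked)), key=masked.__getitem__)
```

For example, if the vocabulary is `["yes", "no", "maybe"]` and only the first two tokens are allowed, the function ignores `"maybe"` even when it has the highest score.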

What is ggml.ai?

ggml.ai develops ggml, a tensor library for machine learning that supports large models and high-performance computation on commodity hardware.

Is ggml.ai optimized for any specific hardware?

Yes. It is optimized for Apple Silicon and also uses AVX/AVX2 intrinsics on x86 architectures.

What projects are associated with ggml.ai?

The ggml.ai projects include whisper.cpp for high-performance inference of OpenAI's Whisper model and llama.cpp for inference of Meta's LLaMA model.

Can I contribute to ggml.ai?

Yes. Individuals and organizations can contribute to ggml.ai by extending the codebase or by financially sponsoring contributors.

How can I contact ggml.ai for business inquiries or contributions?

Business inquiries should be directed to [email protected], and individuals interested in contributing or seeking employment should contact [email protected].