BenchLLM vs ggml.ai

In the clash of BenchLLM vs ggml.ai, which AI Large Language Model (LLM) tool emerges victorious? We assess reviews, pricing, alternatives, features, upvotes, and more.

If you had to choose between BenchLLM and ggml.ai, which one would you go for?

Let's take a closer look at BenchLLM and ggml.ai, both of which are AI-driven large language model (LLM) tools, and see what sets them apart. The upvote count is currently a draw, with both tools earning the same number of upvotes. Other aitools.fyi users have not settled it yet, so the ball is in your court: cast your vote and help determine the winner.

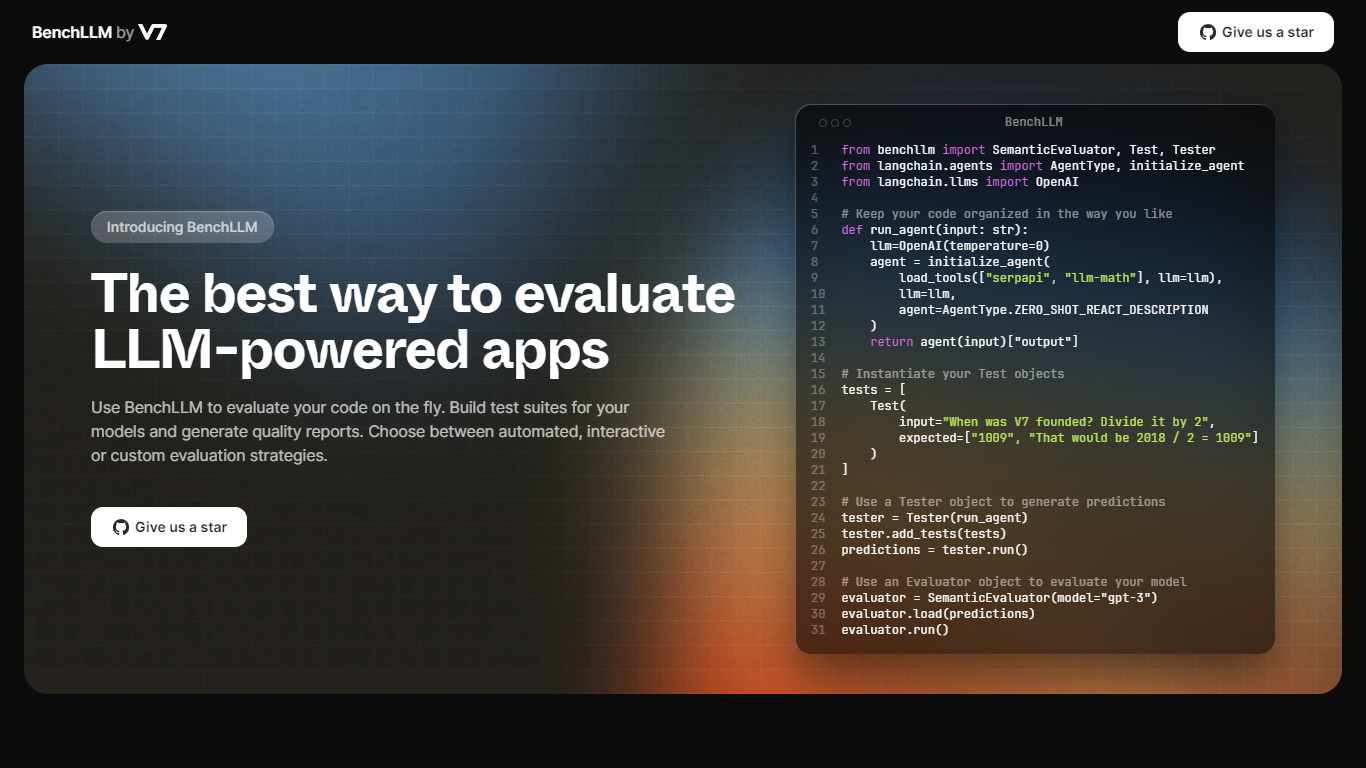

BenchLLM

What is BenchLLM?

BenchLLM provides a comprehensive solution for evaluating AI-powered applications that use Large Language Models (LLMs). It offers a platform for developers to quickly assess their models by building test suites and generating detailed quality reports.

Whether you prefer automated, interactive, or custom evaluation strategies, BenchLLM caters to diverse testing needs. The toolkit ensures that users can keep their code well-organized and tailor their tests to specific requirements.

The powerful command-line interface (CLI) is ideal for integrating into CI/CD pipelines to monitor model performance and detect any regressions in a production environment.

BenchLLM supports a range of APIs, including OpenAI and LangChain, and tests are defined in JSON or YAML. Designed by a team of AI engineers, BenchLLM is an open, flexible tool built to make LLM evaluation seamless and predictable.
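To make the idea of a JSON test definition more concrete, here is a minimal sketch in plain Python. It does not use BenchLLM's own API: the `input` and `expected` field names, the `fake_model` stand-in, and the exact-match rule are all assumptions chosen for illustration.

```python
import json

# Hypothetical test suite: each case pairs an input prompt with acceptable answers.
SUITE_JSON = """
[
  {"input": "What is 1 + 1? Answer with a number only.", "expected": ["2", "2.0"]},
  {"input": "What is the capital of France?", "expected": ["Paris"]}
]
"""


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call (an assumption for this sketch)."""
    canned = {
        "What is 1 + 1? Answer with a number only.": "2",
        "What is the capital of France?": "Lyon",  # deliberately wrong
    }
    return canned.get(prompt, "")


def evaluate(suite, model):
    """Run every case and mark it passed if the output matches an expected answer."""
    results = []
    for case in suite:
        output = model(case["input"]).strip()
        results.append({
            "input": case["input"],
            "output": output,
            "passed": output in case["expected"],
        })
    return results


def pass_rate(results):
    """Fraction of cases that passed (0.0 for an empty suite)."""
    return sum(r["passed"] for r in results) / len(results) if results else 0.0


if __name__ == "__main__":
    report = evaluate(json.loads(SUITE_JSON), fake_model)
    for r in report:
        print("PASS" if r["passed"] else "FAIL", "-", r["input"])
    print(f"pass rate: {pass_rate(report):.0%}")
```

In a CI/CD pipeline, a script along these lines could exit with a non-zero status when the pass rate falls below a chosen threshold, so that a regression fails the build.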

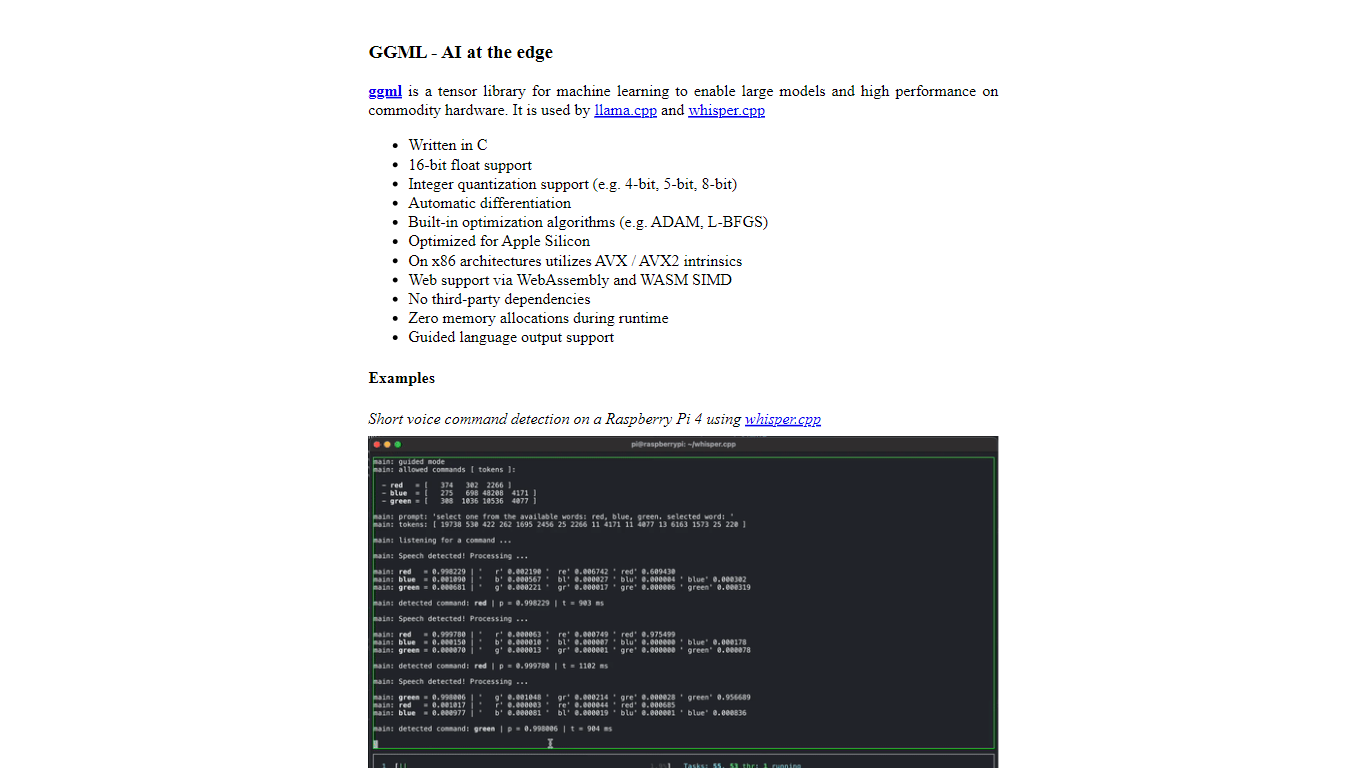

ggml.ai

What is ggml.ai?

ggml.ai brings machine learning to the edge with its tensor library. Built for large model support and high performance on commodity hardware, ggml.ai lets developers run advanced AI algorithms without specialized equipment. The library is written in C and offers 16-bit float and integer quantization support, along with automatic differentiation and built-in optimization algorithms such as Adam and L-BFGS.

It is optimized for Apple Silicon and uses AVX/AVX2 intrinsics on x86 architectures. Web applications can use it via WebAssembly and WASM SIMD support. With zero memory allocations at runtime and no third-party dependencies, ggml.ai is a minimal and efficient solution for on-device inference.
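The integer quantization mentioned above can be illustrated with a small sketch. This is a simplified symmetric scheme written in plain Python for readability; it is not ggml.ai's actual storage format or API, and the function names `quantize_block` and `dequantize_block` are hypothetical.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization: map floats to signed integers plus one shared scale."""
    qmax = (1 << (bits - 1)) - 1  # e.g. 127 for 8 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    print("quantized:", quants)
    print("restored: ", restored)
```

Storing one small integer per weight plus a shared scale is what reduces memory use; the cost is a rounding error of at most about half a quantization step per value.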

Projects like whisper.cpp and llama.cpp demonstrate the high-performance inference capabilities of ggml.ai, with whisper.cpp providing speech-to-text and llama.cpp focusing on efficient inference of Meta's LLaMA large language model. The company welcomes contributions to its codebase and follows an open-core development model under the MIT license. As ggml.ai continues to expand, it seeks talented full-time developers who share its vision for on-device inference to join its team.

Designed to push the envelope of AI at the edge, ggml.ai is a testament to the spirit of play and innovation in the AI community.

BenchLLM Top Features

Automated Evaluation: Automated strategies for evaluating AI models on demand.

Interactive and Custom Testing: Options for interactive or custom evaluation approaches, catering to different development preferences.

Powerful CLI for Monitoring: A user-friendly command-line interface that integrates with CI/CD pipelines for continuous performance monitoring.

Flexible API Support: Compatibility with various APIs like OpenAI and LangChain out of the box, facilitating diverse test scenarios.

Intuitive Test Definition: Easy definition and organization of tests in JSON or YAML formats to streamline the evaluation process.

ggml.ai Top Features

Written in C: Ensures high performance and compatibility across a range of platforms.

Optimization for Apple Silicon: Delivers efficient processing and lower latency on Apple devices.

Support for WebAssembly and WASM SIMD: Lets web applications use machine learning capabilities.

No Third-Party Dependencies: Makes for an uncluttered codebase and convenient deployment.

Guided Language Output Support: Enhances human-computer interaction with more intuitive AI-generated responses.

BenchLLM Category

- Large Language Model (LLM)

ggml.ai Category

- Large Language Model (LLM)

BenchLLM Pricing Type

- Freemium

ggml.ai Pricing Type

- Freemium