Last updated 10-23-2025

BenchLLM

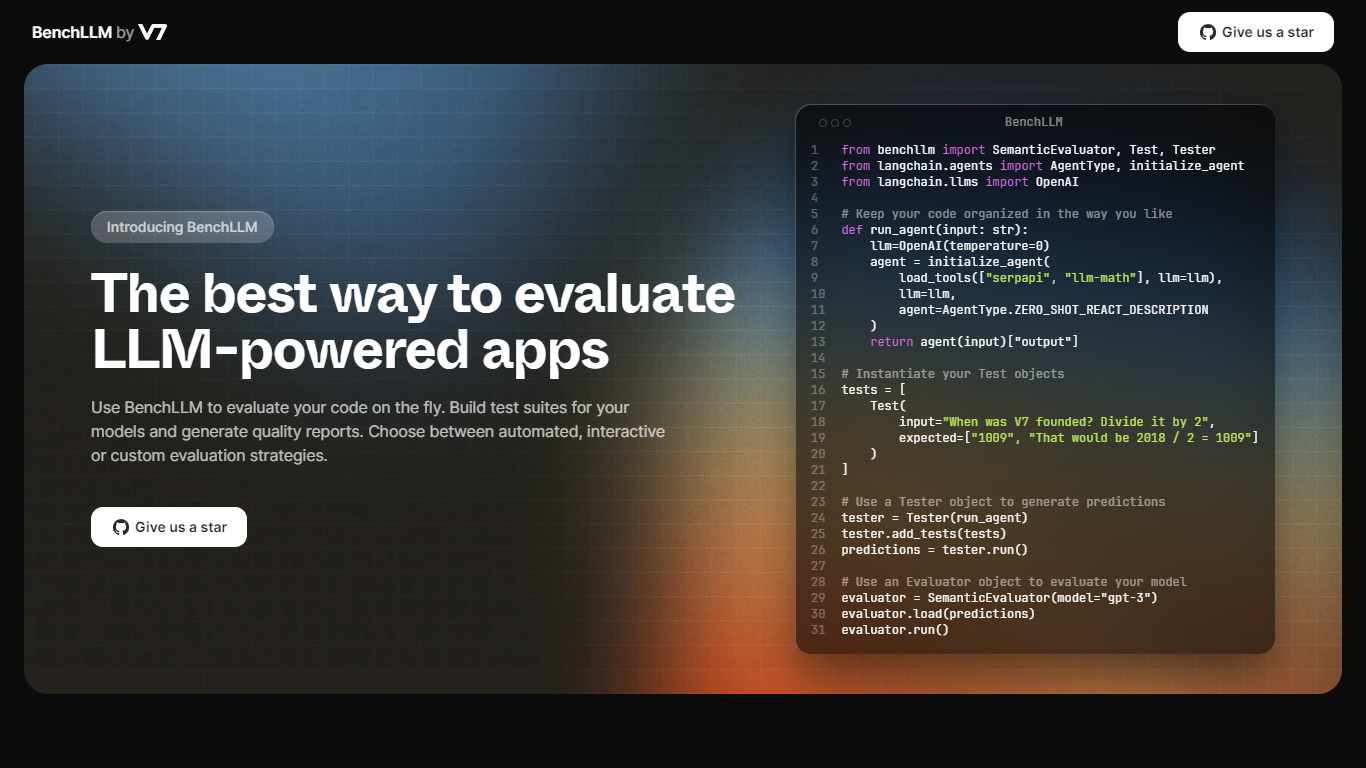

BenchLLM is a tool for evaluating applications built on Large Language Models (LLMs). Developers use it to build test suites for their models and generate detailed quality reports from the results.

BenchLLM supports automated, interactive, and custom evaluation strategies, so users can keep their code organized and tailor tests to specific requirements.

The command-line interface (CLI) can be integrated into CI/CD pipelines to monitor model performance and detect regressions in a production environment.

BenchLLM supports APIs including OpenAI and LangChain, and tests are defined in JSON or YAML. Built by a team of AI engineers, BenchLLM is an open, flexible tool intended to make LLM evaluation predictable.

Automated Evaluation: Automated strategies for evaluating AI models on demand.

Interactive and Custom Testing: Options for interactive or custom evaluation approaches, catering to different development preferences.

Powerful CLI for Monitoring: A user-friendly command-line interface that integrates with CI/CD pipelines for continuous performance monitoring.

Flexible API Support: Compatibility with APIs such as OpenAI and LangChain out of the box, facilitating diverse test scenarios.

Intuitive Test Definition: Easy definition and organization of tests in JSON or YAML formats to streamline the evaluation process.
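
To make the idea of a JSON-defined test suite concrete, here is a minimal, self-contained sketch in plain Python. It does not use BenchLLM itself: the field names `input` and `expected`, the `toy_model` stand-in, and the `evaluate` helper are all assumptions made for illustration, and the exact-match check is only one simple automated strategy.

```python
import json

# Hypothetical test definitions. The field names "input" and "expected"
# are assumptions for this sketch, not a confirmed BenchLLM schema.
SUITE_JSON = """
[
  {"input": "What is 1+1?", "expected": ["2", "2.0"]},
  {"input": "Capital of France?", "expected": ["Paris"]}
]
"""


def toy_model(prompt: str) -> str:
    """Stand-in for an LLM-powered application (canned answers only)."""
    answers = {"What is 1+1?": "2", "Capital of France?": "Lyon"}
    return answers.get(prompt, "")


def evaluate(suite, model):
    """Run every test case and mark it passed if the output
    exactly matches any of the expected answers."""
    report = []
    for case in suite:
        output = model(case["input"]).strip()
        passed = output in case["expected"]
        report.append({"input": case["input"], "output": output, "passed": passed})
    return report


suite = json.loads(SUITE_JSON)
report = evaluate(suite, toy_model)
for row in report:
    status = "PASS" if row["passed"] else "FAIL"
    print(status, "-", row["input"], "->", row["output"])
```

Because the suite is plain JSON, it can live in version control next to the application and be reviewed like any other change. An interactive or custom strategy would replace the exact-match line inside `evaluate` with a human prompt or a different comparison.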

What is BenchLLM?

BenchLLM is a tool used to evaluate LLM-powered applications by building test suites and generating quality reports.

What kind of evaluation strategies does BenchLLM offer?

Users can choose between automated, interactive, or custom evaluation strategies.

Which APIs does BenchLLM support?

BenchLLM supports popular APIs such as OpenAI and LangChain, among others.

Can I organize my tests into suites using BenchLLM?

Yes, you can organize your tests into suites in JSON or YAML format, allowing them to be easily versioned and managed.

Is BenchLLM suitable for monitoring model performance in production?

Yes. BenchLLM's CLI can be integrated into CI/CD pipelines to monitor model performance and detect regressions in production environments.
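
As a rough illustration of what detecting a regression in a CI/CD pipeline can mean, the sketch below compares the pass rate of the current run against a stored baseline and yields a non-zero exit code when it drops too far. The helper names, the baseline value, and the tolerance are hypothetical and are not part of BenchLLM.

```python
def pass_rate(results):
    """Fraction of passed tests; an empty run counts as 0.0."""
    return sum(results) / len(results) if results else 0.0


def regression_gate(current, baseline, tolerance=0.05):
    """Return 0 if the current pass rate is within `tolerance` of the
    baseline pass rate, otherwise 1 (a failing exit code for CI)."""
    return 0 if pass_rate(current) >= baseline - tolerance else 1


# Hypothetical results from the latest evaluation run (True = test passed).
current_results = [True, True, False, True]

# Hypothetical baseline pass rate recorded from an earlier, accepted run.
exit_code = regression_gate(current_results, baseline=0.9)
print("pass rate:", pass_rate(current_results), "exit code:", exit_code)
# A CI script would typically end with: sys.exit(exit_code)
```

A pipeline step that exits with a non-zero code fails the build, which is how a drop in evaluation quality can block a deployment.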