Last updated 10-23-2025

EleutherAI

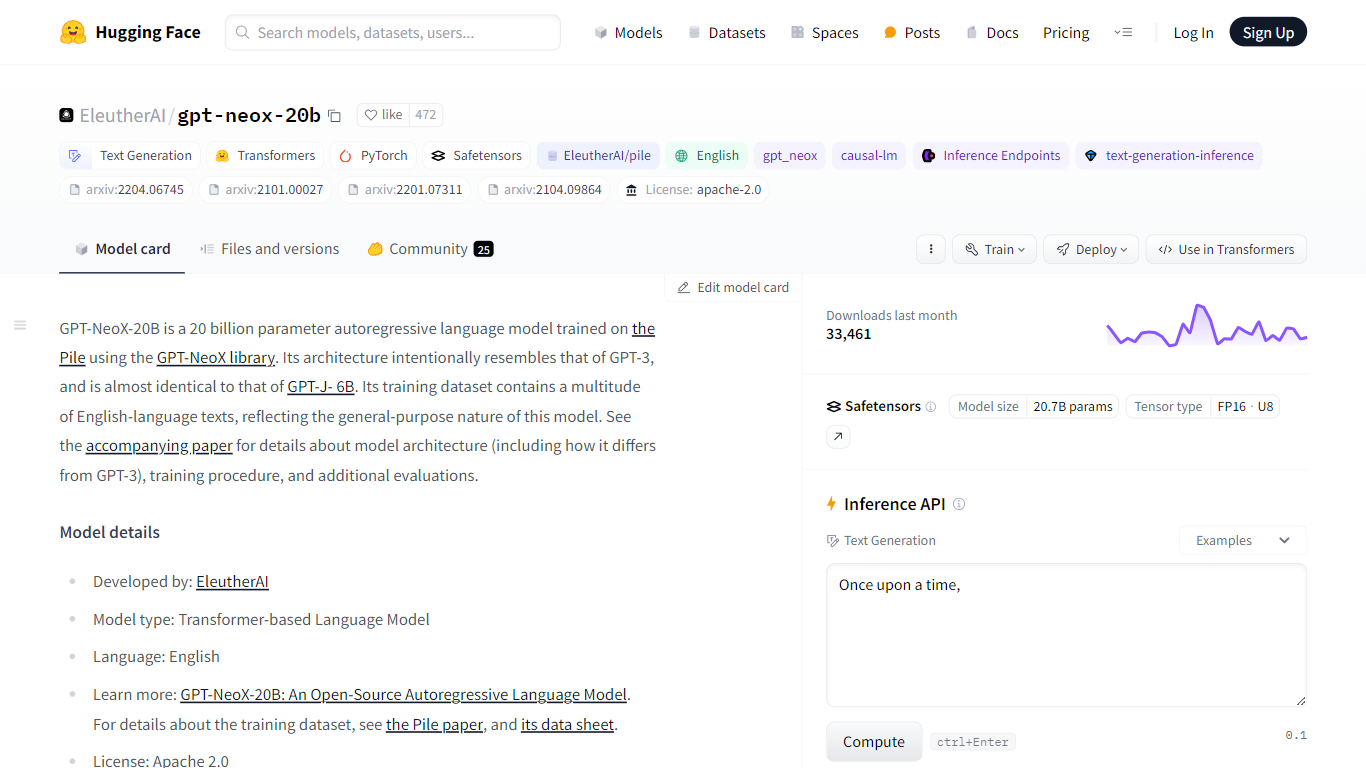

GPT-NeoX-20B is EleutherAI's 20-billion-parameter autoregressive language model, available on the Hugging Face Hub. Its architecture intentionally resembles that of GPT-3, and it was trained (not fine-tuned) on the Pile, a large and diverse English-language dataset. The model is part of an open-source effort to make large language models more accessible. It gives researchers and developers a strong starting point for a range of NLP tasks, though users need to account for its limitations and biases. In keeping with EleutherAI's commitment to open science, it is released under the permissive Apache 2.0 license, which allows it to be fine-tuned and adapted for scientific and research use.

Model Size: 20 billion parameters, giving the model strong general-purpose English text generation.

Training Dataset: Trained on the Pile, a diverse dataset curated specifically for training large language models.

Open Science: A commitment to democratizing AI through open-source availability and an open-science approach.

Model Accessibility: Loads directly through the Hugging Face Transformers library.

Community Support: Community engagement and support are available through channels such as the EleutherAI Discord.

What is the purpose of the GPT-NeoX-20B model?

GPT-NeoX-20B was developed by EleutherAI primarily for research purposes. It can also be fine-tuned and adapted for deployment, provided that use complies with its Apache 2.0 license.

Can GPT-NeoX-20B be used as-is for customer-facing products?

No. GPT-NeoX-20B is not intended for deployment as-is and should not be used for human-facing interactions without supervision. It is a research model and needs further fine-tuning before it is applied to a specific downstream task.

Was GPT-NeoX-20B trained on a variety of text sources?

Yes, GPT-NeoX-20B was trained using the Pile dataset, which contains a wide range of English-language texts from numerous sources.

How can I use the GPT-NeoX-20B model for text generation?

You can load GPT-NeoX-20B with the AutoModelForCausalLM class from the Transformers library, together with the matching tokenizer, and then generate text from a prompt.
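As a rough illustration, a minimal loading-and-generation sketch might look like the following. It assumes the Transformers library and PyTorch are installed and that the machine has enough memory for a 20-billion-parameter model (the weights alone run to tens of gigabytes). The Hub identifier `EleutherAI/gpt-neox-20b`, the helper name `generate_text`, and the `max_new_tokens` default are choices made for this example and are not taken from the text above.

```python
# Hub identifier for the model (an assumption of this sketch).
MODEL_ID = "EleutherAI/gpt-neox-20b"


def generate_text(prompt, max_new_tokens=50, model_id=MODEL_ID):
    """Load the model and tokenizer, then continue `prompt`.

    `generate_text` is a hypothetical helper written for illustration.
    """
    # Imported here so that defining the helper does not itself
    # require Transformers to be installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # Tokenize the prompt into PyTorch tensors.
    inputs = tokenizer(prompt, return_tensors="pt")

    # Autoregressively generate up to `max_new_tokens` new tokens.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Decode the full sequence (prompt plus continuation) back to text.
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


# Example call (downloads the full model weights on first use):
# print(generate_text("EleutherAI is a research group that"))
```

The output is a raw continuation from a base model with no instruction tuning or safety filtering, so it should be reviewed before anything is shown to end users.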

What is the Pile dataset?

The Pile is a large-scale English-language dataset that combines text from 22 diverse sources. It is known for the variety of its content and was used to train GPT-NeoX-20B.